What is Exploratory Data Analysis?

Exploratory data analysis (EDA) is used by data scientists to analyze data sets and summarize their main characteristics, often employing data visualization methods. It helps them understand a data set’s main features, find patterns, and discover how different parts of the data are connected.

EDA is primarily used to see what data can reveal beyond the formal modeling or hypothesis testing task, and it provides a better understanding of data set variables and the relationships between them. It can also help determine whether the statistical techniques you are considering for data analysis are appropriate. Originally developed by American mathematician John Tukey in the 1970s, EDA techniques continue to be widely used in the data discovery process today.

Why Exploratory Data Analysis Is Important:

- The main purpose of EDA is to help look at data before making any assumptions. It can help identify obvious errors, better understand patterns within the data, detect outliers or anomalous events, and find interesting relations among the variables.

- One can expect to find data patterns, outliers, and possible correlations between variables.

- EDA helps to understand the dataset by showing how many features it has, what type of data each feature contains, and how the data is distributed.

- EDA helps in the identification of data quality concerns and missing values, allowing for data cleaning and preparation. Visualization approaches aid in hypothesis formation by providing a graphical comprehension of data distributions.

- It helps to identify hidden patterns and relationships between different data points, which helps us in model building.

- It allows us to identify errors or unusual data points (outliers) that could affect our results.

- EDA provides context for subsequent modeling by highlighting potential features, and it helps to guide model selection.

- The insights gained from EDA help us to identify the most important features for building models and guide us on how to prepare them for better performance.

- Understanding the data helps us to choose the best modeling techniques and adjust them for better results.
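
Several of the points above (feature count, data types, missing values, obvious errors) map onto a few pandas calls. The following is a minimal sketch on a made-up DataFrame; the column names, the values, and the assumption that marks are scored out of 100 are illustrative only.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data standing in for a real dataset
df = pd.DataFrame({
    "gender": ["F", "M", "F", "M", "F"],
    "study_hours": [2.0, 3.5, np.nan, 5.0, 4.0],
    "marks": [45, 60, 70, 85, 1000],  # 1000 is a deliberate data-entry error
})

n_rows, n_features = df.shape          # how many rows and features there are
dtypes = df.dtypes                     # what type of data each feature holds
missing_per_column = df.isna().sum()   # missing values per feature

# Assumption for this sketch: valid marks lie between 0 and 100
suspicious = df[(df["marks"] < 0) | (df["marks"] > 100)]

print(n_rows, n_features)
print(dtypes)
print(missing_per_column)
print(suspicious)
```

Running checks like these before any modeling makes it easier to spot problems while they are still cheap to fix.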

Types of Exploratory Data Analysis:

There are three primary types of EDA:

- Univariate Exploratory Data Analysis

- Bivariate Analysis

- Multivariate Exploratory Data Analysis

Univariate Exploratory Data Analysis:

Univariate analysis studies one variable at a time to understand its distribution, central tendency, and variability. For example, finding out which product performs best means examining each product’s sales figures independently of other factors.

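# Assumes df is a pandas DataFrame that has already been loaded

import seaborn as sns

import matplotlib.pyplot as plt

# Histogram of a single variable, with a kernel density estimate overlaid

sns.histplot(df['column_name'], kde=True)

plt.show()

# Boxplot for detecting outliers

sns.boxplot(x=df['column_name'])

plt.show()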

Graphical Methods: histograms, box plots, density plots, violin plots, Q-Q plots

Non-Graphical Methods: mean, median, mode, dispersion measures, percentiles, skewness, kurtosis
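
The non-graphical measures listed above can be computed directly with pandas. This is a small sketch that reuses the marks values from the NumPy example later in this article; the `summary` dictionary is just an illustrative container.

```python
import pandas as pd

marks = pd.Series([45, 50, 60, 70, 85, 90, 100, 30, 55], name="marks")

summary = {
    "mean": marks.mean(),
    "median": marks.median(),
    "std": marks.std(),          # sample standard deviation (ddof=1)
    "q1": marks.quantile(0.25),  # 25th percentile
    "q3": marks.quantile(0.75),  # 75th percentile
    "skewness": marks.skew(),    # > 0 means a longer right tail
    "kurtosis": marks.kurt(),    # excess kurtosis (a normal distribution gives 0)
}

for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

Note that pandas reports excess kurtosis, so values are measured relative to a normal distribution rather than on the raw scale.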

Bivariate Analysis:

Bivariate analysis focuses on the relationship between two variables in order to find connections, correlations, and dependencies. It helps to understand how two variables interact with or depend on each other. Common techniques include:

- Scatter plots, which visualize the relationship between two continuous variables.

- The correlation coefficient, which measures how strongly two variables are related. Pearson’s correlation is the most common choice; it measures the strength and direction of a linear relationship between two continuous numerical variables.

- Cross-tabulations (contingency tables), which show the joint frequency distribution of two categorical variables and help to understand their relationship.

- Line graphs, which are useful for comparing two variables over time in time series data to identify trends or patterns.

- Covariance, which measures how two variables change together. Because its magnitude depends on the variables’ units, it is usually paired with the correlation coefficient for a clearer, standardized view of the relationship.

# Scatter plot for two continuous variables

sns.scatterplot(x=df['column_x'], y=df['column_y'])

plt.show()

# Correlation heatmap (numeric columns only; non-numeric columns would raise an error in recent pandas)

plt.figure(figsize=(10, 8))

sns.heatmap(df.corr(numeric_only=True), annot=True, cmap='coolwarm')

plt.show()

# Boxplot to compare a categorical and a continuous variable

sns.boxplot(x=df['categorical_column'], y=df['numerical_column'])

plt.show()
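
The non-graphical bivariate measures described above (covariance, Pearson’s correlation, and cross-tabulation) can be sketched as follows. The data and column names are invented for illustration.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data
df = pd.DataFrame({
    "study_hours": [1, 2, 3, 4, 5, 6],
    "marks": [35, 45, 50, 65, 70, 90],
    "gender": ["F", "M", "F", "M", "F", "M"],
    "passed": ["no", "yes", "yes", "yes", "yes", "yes"],
})

# Sample covariance: its size depends on the units of both variables
cov_xy = df["study_hours"].cov(df["marks"])

# Pearson correlation (the pandas default): unit-free, between -1 and 1
pearson_r = df["study_hours"].corr(df["marks"])

# Correlation is covariance rescaled by both standard deviations
r_from_cov = cov_xy / (df["study_hours"].std() * df["marks"].std())

# Contingency table for two categorical variables
table = pd.crosstab(df["gender"], df["passed"])

print(cov_xy, pearson_r, r_from_cov)
print(table)
```

The rescaling step shows why correlation is easier to compare across variable pairs than raw covariance.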

Multivariate Exploratory Data Analysis:

Multivariate analysis helps analyze and understand complex relationships between three or more variables simultaneously. For example, exploring the relationship between a person’s height, weight, age, and health outcomes requires sophisticated analytical techniques to identify meaningful patterns.

Graphical Methods: scatter-plot matrices, heat maps, parallel-coordinates plots, dimensionality-reduction visualizations

Non-Graphical Methods: multiple regression, factor analysis, cluster analysis, principal component analysis

# Pair plot to visualize relationships between multiple variables

sns.pairplot(df)

plt.show()

# Grouped bar plot for categorical variables

sns.countplot(x='categorical_column_1', hue='categorical_column_2', data=df)

plt.show()
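
Principal component analysis, listed among the non-graphical methods above, can be illustrated with NumPy alone. This is a sketch on synthetic data in which the third feature is deliberately almost a copy of the first; in practice a library such as scikit-learn would typically be used instead of a hand-written SVD.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data: 200 rows, 3 features; x3 is nearly a copy of x1
n = 200
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
x3 = x1 + 0.1 * rng.normal(size=n)
X = np.column_stack([x1, x2, x3])

# Standardize each feature so that scale differences do not dominate
Xs = (X - X.mean(axis=0)) / X.std(axis=0)

# The right singular vectors of the standardized data are the principal axes
U, S, Vt = np.linalg.svd(Xs, full_matrices=False)

# Share of total variance captured by each component, in descending order
explained_variance_ratio = S**2 / np.sum(S**2)

# Project the data onto the first two principal components
scores = Xs @ Vt[:2].T

print(explained_variance_ratio)
print(scores.shape)
```

Because two of the three features carry almost the same information, the last component should explain very little variance, which is the signal that the data can be described with fewer dimensions.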

Tools and Libraries for EDA:

Python Libraries:

Some of the most popular Python libraries used for exploratory data analysis (EDA) include Pandas, NumPy, Matplotlib, and Seaborn.

Pandas:

Pandas is an essential library for data manipulation and cleaning in Python. It provides data structures like Series and DataFrame that allow users to easily load, clean, and manipulate data. Pandas can be used to compute summary statistics and perform basic data wrangling tasks like filtering, sorting, and handling missing values. For example, the .describe() method returns basic statistics for each numeric column in a dataset with just one line of code.

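# Assumes df has 'marks', 'gender' and 'attendance' columns

df['marks'].mean()  # average mark

df['marks'].median()  # middle value

df['marks'].value_counts()  # frequency of each distinct mark

df.corr(numeric_only=True)  # pairwise correlation of numeric columns

df.groupby(['gender', 'attendance'])['marks'].mean()  # average mark per group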
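
The .describe() call and the wrangling tasks mentioned above (filtering, sorting, handling missing values) might look like this on a small made-up DataFrame. Filling missing marks with the median is only one possible choice and is an assumption of this sketch.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data with one missing mark
df = pd.DataFrame({
    "gender": ["F", "M", "F", "M"],
    "attendance": ["high", "low", "high", "high"],
    "marks": [80.0, 55.0, np.nan, 70.0],
})

stats = df.describe()                               # count, mean, std, quartiles (numeric columns)
high = df[df["attendance"] == "high"]               # filtering rows
ranked = df.sort_values("marks", ascending=False)   # sorting; NaN rows go last by default

# Handling missing values: fill with the median of the observed marks
filled = df.assign(marks=df["marks"].fillna(df["marks"].median()))

print(stats)
print(filled)
```

Using `assign` returns a new DataFrame, so the original data stays untouched while alternatives are compared.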

NumPy:

NumPy is the fundamental package for scientific computing in Python. It adds support for large multi-dimensional arrays and matrices alongside a vast collection of high-level mathematical functions to operate on these arrays. NumPy is commonly used alongside Pandas for numeric computations during EDA.

import numpy as np

marks = np.array([45, 50, 60, 70, 85, 90, 100, 30, 55])

np.mean(marks)

np.median(marks)

np.std(marks)

np.var(marks)

np.min(marks)

np.max(marks)

# Interquartile range (IQR) rule: flag values more than 1.5 * IQR beyond the quartiles

Q1, Q3 = np.percentile(marks, [25, 75])

IQR = Q3 - Q1

outliers = marks[(marks < Q1 - 1.5 * IQR) | (marks > Q3 + 1.5 * IQR)]

np.sort(marks)

np.argsort(marks)

Matplotlib:

Matplotlib is a comprehensive library for creating static, animated, and interactive visualizations. It allows for generating common plots like line plots, bar charts, histograms and scatter plots with just a few lines of code. Matplotlib forms the basis for more advanced and aesthetic visualization libraries in Python.

import matplotlib.pyplot as plt

# Histogram of the marks array defined in the NumPy example

plt.hist(marks)

plt.xlabel("Marks")

plt.ylabel("Frequency")

plt.title("Distribution of Marks")

plt.show()

Seaborn:

Seaborn is a data visualization library built on top of Matplotlib. It provides a high-level interface for drawing beautiful and informative statistical graphics. Seaborn makes it easy to generate common plots found in statistical data analysis like joint and marginal distributions, correlation plots, nested violin plots, and heatmaps. It is commonly used for exploratory analysis to detect patterns and relationships in the data.

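import seaborn as sns

import matplotlib.pyplot as plt

# Distribution of marks with a kernel density estimate

sns.histplot(df['marks'], kde=True)

plt.show()

# Marks as a function of study hours

sns.lineplot(x='study_hours', y='marks', data=df)

plt.show()

# Same relationship as a scatter plot, colored by gender

sns.scatterplot(

x='study_hours',

y='marks',

hue='gender',

data=df

)

plt.show()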

R Packages:

Some key R packages used for exploratory data analysis include ggplot2, dplyr, tidyr, and plotly.

ggplot2:

ggplot2 is one of the most popular data visualization packages for R. It provides a powerful and flexible grammar of graphics for creating complex multi-layered plots. ggplot2 makes it easy to visualize univariate, bivariate, and multivariate data using the ggplot() function or the older qplot() shortcut.

dplyr:

dplyr is a very useful package for data manipulation and wrangling tasks in R. It provides fast implementations of common operations such as filtering, sorting, transforming, and joining data frames. Examples include grouping and summarizing data with group_by() + summarize() or selecting specific columns with select().

tidyr:

tidyr is another essential tool for data tidying and reshaping. It contains functions to pivot tables for switching between wide and long data formats. This can help flatten messy datasets for downstream analysis.

plotly:

plotly can be used to create interactive plots, dashboards and web applications directly from R. It allows for building dynamic and publication-quality graphs for exploratory analysis, interactive reports and dashboards.

Choosing the right library based on analysis needs is important for effective EDA.

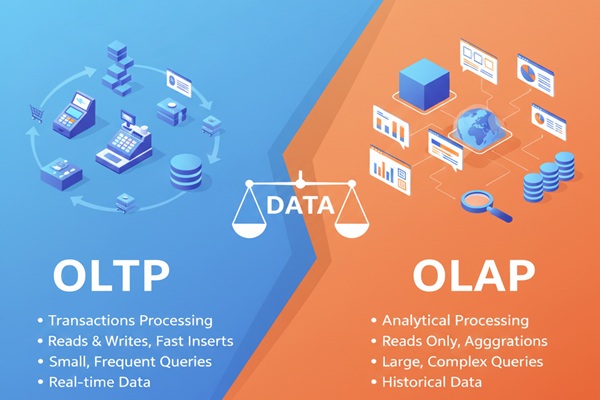

How Does Exploratory Data Analysis Differ From Other Types of Data Analysis?

Exploratory data analysis is the first step before applying advanced data analysis techniques such as predictive analysis, sentiment analysis, or cluster analysis. Its key purpose is to give a quick overview of the patterns and errors in the data so that later analysis stages produce accurate results.

Other data analysis techniques, by contrast, are used to obtain specific insights. For example, sentiment analysis assesses the tone or sentiment behind a text or audio recording. This is valuable in the customer support industry, where businesses use it to analyze customers’ sentiments and provide better support.

| Type | Goal | Nature | Example Question |
|---|---|---|---|
| EDA | Understand data | Open-ended | What patterns exist? |
| Descriptive | Summarize | Past-focused | What happened? |
| Diagnostic | Explain | Cause-focused | Why did it happen? |
| Confirmatory | Validate | Hypothesis-driven | Is it significant? |
| Predictive | Predict | Future-focused | What will happen? |
| Prescriptive | Recommend | Action-oriented | What should we do? |

Similarly, predictive analysis is another excellent technique for making predictions or forecasts. This analysis involves analyzing historical data to forecast future trends. For example, organizations launching new products in the market can use predictive analysis to predict consumer demand and forecast their revenue.

Although both exploratory data analysis and the other techniques help organizations make data-driven decisions, the key difference is their use case. The primary purpose of exploratory data analysis is to understand the data and prepare it so that later analyses produce more reliable results. The other techniques, as described above, answer specific questions.

How Can You Perform Exploratory Data Analysis?

Modern EDA follows a systematic eight-step process that combines traditional statistical rigor with contemporary automated techniques to ensure comprehensive data understanding.

Data Collection – Gather relevant data from assorted sources, ensuring quality and completeness with automated schema validation against domain-specific data contracts.
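
Schema validation against a data contract, as mentioned in the data collection step, could be sketched like this. The `CONTRACT` dictionary, the `validate_schema` helper, and the column names are all hypothetical; real projects often use a dedicated validation library instead.

```python
import pandas as pd
from pandas.api.types import is_numeric_dtype

# Hypothetical data contract: expected columns and whether each is numeric or text
CONTRACT = {"student_id": "numeric", "study_hours": "numeric", "gender": "text"}

def validate_schema(df, contract):
    """Return a list of human-readable contract violations (empty if none)."""
    problems = []
    for column, expected in contract.items():
        if column not in df.columns:
            problems.append(f"missing column: {column}")
            continue
        numeric = is_numeric_dtype(df[column])
        if expected == "numeric" and not numeric:
            problems.append(f"{column}: expected numeric, got {df[column].dtype}")
        elif expected == "text" and numeric:
            problems.append(f"{column}: expected text, got {df[column].dtype}")
    for column in df.columns:
        if column not in contract:
            problems.append(f"unexpected column: {column}")
    return problems

good = pd.DataFrame({"student_id": [1, 2], "study_hours": [2.5, 4.0], "gender": ["F", "M"]})
bad = pd.DataFrame({"student_id": [1, 2], "study_hours": ["2.5", "four"]})

print(validate_schema(good, CONTRACT))
print(validate_schema(bad, CONTRACT))
```

Returning a list of problems rather than raising on the first one lets an analyst see every violation in a single pass.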

Inspecting the Data Types – Identify critical variables, data types, missing values, and initial distributions using AI-powered profiling tools that highlight potential quality issues.

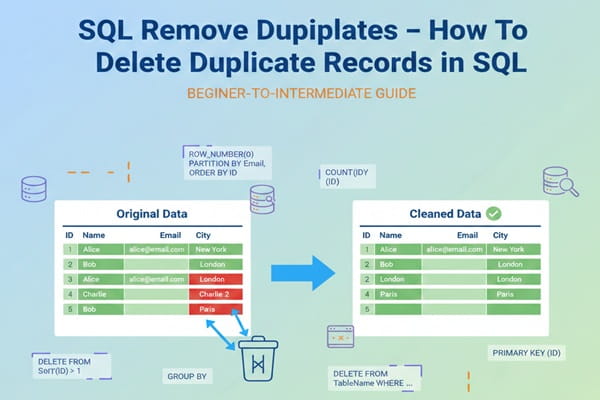

Data Cleansing – Remedy errors, inconsistencies, and duplicates while preserving data integrity through systematic transformation tracking and statistical justification documentation.
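
One lightweight way to track transformations during cleansing is to record the row count before and after each step. The sketch below assumes a hypothetical `apply_step` helper and a made-up rule that valid marks lie between 0 and 100.

```python
import pandas as pd

# Hypothetical raw data: one exact duplicate, one missing mark, one impossible mark
raw = pd.DataFrame({
    "student_id": [1, 2, 2, 3, 4],
    "marks": [45.0, 60.0, 60.0, None, 250.0],
})

log = []  # one entry per transformation: (step name, rows before, rows after)

def apply_step(df, name, func):
    """Apply a cleaning function and record how many rows it kept."""
    out = func(df)
    log.append((name, len(df), len(out)))
    return out

clean = apply_step(raw, "drop exact duplicates", lambda d: d.drop_duplicates())
clean = apply_step(clean, "drop rows with missing marks", lambda d: d.dropna(subset=["marks"]))
clean = apply_step(clean, "keep marks in [0, 100]", lambda d: d[d["marks"].between(0, 100)])

for entry in log:
    print(entry)
```

The log makes it possible to justify each step later, for example by showing how many rows a given rule removed.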

| Tool | Purpose |
|---|---|
| NumPy | Numerical operations |
| Pandas | Data handling |
| Matplotlib | Basic plots |
| Seaborn | Statistical plots |
| SQL | Data extraction |

Identifying Patterns and Correlations – Visualize datasets using different data visualization tools with automated chart selection based on variable characteristics.
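
Chart selection based on variable characteristics can be approximated with a simple rule on column types. The `suggest_chart` function below is a hypothetical illustration, not the behavior of any particular tool, and its rules mirror the plot choices used earlier in this article.

```python
import pandas as pd
from pandas.api.types import is_numeric_dtype

def suggest_chart(df, x, y=None):
    """Suggest a chart type from the dtypes of one or two columns."""
    x_num = is_numeric_dtype(df[x])
    if y is None:
        # Univariate: distribution of a number, or frequencies of a category
        return "histogram" if x_num else "count plot"
    y_num = is_numeric_dtype(df[y])
    if x_num and y_num:
        return "scatter plot"
    if x_num != y_num:
        # One categorical and one numeric variable
        return "box plot"
    return "grouped count plot"

# Hypothetical toy data
df = pd.DataFrame({
    "gender": ["F", "M", "F"],
    "attendance": ["high", "low", "high"],
    "study_hours": [2.0, 3.5, 5.0],
    "marks": [45, 60, 85],
})

print(suggest_chart(df, "marks"))
print(suggest_chart(df, "gender", "marks"))
```

A rule like this only gives a starting point; the analyst still decides whether the suggested chart answers the question at hand.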

Performing Descriptive Statistics – Calculate measures of central tendency, variability, and distribution characteristics with automated hypothesis generation for analyst validation.

Perform Advanced Analysis – Apply multivariate techniques and ML approaches to gain deeper insights, incorporating specialized methods for temporal, spatial, and unstructured data.

Interpret Data – Generate insights within the appropriate business context using AI-assisted narrative generation that explains statistical patterns in business terms.

Document and Report – Record steps, techniques, and findings for stakeholders through reproducible notebooks with version-controlled dependencies and comprehensive operation logs.

What Are the Challenges of Exploratory Data Analysis?

Data Unification and Integration Complexity

Reconciling inconsistent data models from multiple sources complicates EDA preparation, requiring sophisticated schema mapping and transformation capabilities that preserve analytical context while enabling cross-system pattern detection.

Data Quality and Reliability Issues

Inconsistencies, missing values, outliers, and measurement errors can lead to flawed conclusions if not systematically addressed through comprehensive quality assessment frameworks and statistical validation procedures.

Scalability and Performance Limitations

High-volume datasets strain traditional EDA methods, preventing real-time analysis and requiring distributed computing frameworks that maintain interactive responsiveness while processing terabyte-scale information.
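
Before reaching for a distributed framework, a dataset that does not fit in memory can often be summarized in chunks. The sketch below uses the `chunksize` parameter of `pandas.read_csv`; the in-memory CSV is a stand-in for a large file, and chunking is a simpler substitute for the distributed approach described above, not an equivalent.

```python
import io
import pandas as pd

# A tiny in-memory CSV standing in for a file too large to load at once
values = [45, 50, 60, 70, 85, 90, 100, 30, 55]
source = io.StringIO("marks\n" + "\n".join(str(v) for v in values))

total = 0.0
count = 0

# Each chunk is an ordinary DataFrame holding at most 4 rows
for chunk in pd.read_csv(source, chunksize=4):
    total += chunk["marks"].sum()
    count += len(chunk)

running_mean = total / count
print(count, running_mean)
```

Only statistics that can be combined across chunks (sums, counts, minima, maxima) work this way directly; exact medians and quantiles need a different approach.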

| Challenge | Description |
|---|---|
| Data quality | Missing, noisy, inconsistent data |
| Scale | Big & high-dimensional datasets |
| Outliers | Hard to classify & handle |
| Complexity | Non-linear relationships |
| Visualization | Misleading or cluttered charts |
| Bias | Subjective interpretation |
| Time | Iterative and slow |
| Privacy | Restricted data usage |

Security and Privacy Concerns

Sensitive data introduces risks of unauthorized access and compliance violations, necessitating advanced governance frameworks that enable exploration while protecting individual privacy and meeting regulatory requirements.

Subjectivity and Cognitive Bias

Differences in human interpretation can introduce confirmation bias and pattern overfitting, requiring systematic validation mechanisms and collaborative review processes that maintain analytical objectivity.

Data Consistency and Versioning

Maintaining reproducibility while datasets evolve requires sophisticated version control systems that track both data evolution and analytical progression throughout exploration workflows.
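
A very small building block for this kind of tracking is a content fingerprint that changes whenever the data changes, which can then be recorded next to each analysis result. The `fingerprint` helper below is a hypothetical sketch built on `pandas.util.hash_pandas_object`; it is not a replacement for a full data versioning system.

```python
import hashlib
import pandas as pd

def fingerprint(df):
    """Return a short hex digest of a DataFrame's columns, index, and values."""
    row_hashes = pd.util.hash_pandas_object(df, index=True)  # one uint64 per row
    digest = hashlib.sha256()
    digest.update(",".join(map(str, df.columns)).encode("utf-8"))  # column names
    digest.update(row_hashes.values.tobytes())                      # row contents
    return digest.hexdigest()[:16]

# Hypothetical toy data: v2 differs from v1 by a single corrected value
v1 = pd.DataFrame({"student_id": [1, 2, 3], "marks": [45, 60, 85]})
v2 = v1.copy()
v2.loc[0, "marks"] = 46

print(fingerprint(v1))
print(fingerprint(v2))
```

Storing the fingerprint alongside a notebook’s outputs makes it easy to tell whether a result was produced from the current version of the data.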

High-Dimensional Data Complexity

The curse of dimensionality demands specialized algorithms and mathematical techniques that can identify meaningful patterns in complex feature spaces while avoiding spurious correlations.
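
The risk of spurious correlations can be demonstrated with pure noise: as the number of features grows, some pair will look strongly correlated by chance alone. The sketch below uses synthetic data and a hypothetical helper name, and it assumes only 30 rows to make the effect easy to see.

```python
import numpy as np

rng = np.random.default_rng(42)
n_rows = 30  # few observations relative to the number of features

def max_abs_offdiag_corr(n_features):
    """Largest absolute pairwise correlation among independent noise features."""
    X = rng.normal(size=(n_rows, n_features))  # no real relationships exist
    corr = np.corrcoef(X, rowvar=False)        # feature-by-feature correlation matrix
    np.fill_diagonal(corr, 0.0)                # ignore each feature's self-correlation
    return np.abs(corr).max()

few = max_abs_offdiag_corr(5)     # 10 feature pairs
many = max_abs_offdiag_corr(200)  # 19,900 feature pairs

print(f"5 features:   strongest correlation = {few:.2f}")
print(f"200 features: strongest correlation = {many:.2f}")
```

Since every feature here is independent noise, any strong correlation found in the wide dataset is spurious, which is why high-dimensional findings need validation on held-out data or correction for multiple comparisons.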

Addressing these challenges requires adopting best practices for smooth data integration and systematic data-quality management through comprehensive governance frameworks.